The AI Illusion: Your Next Big Breakthrough is Actually Just Good Engineering

Every engineering leader today feels the pressure. Every week, a new tech giant pulls back the curtain on a seemingly magical AI tool.

Spotify unveils Honk, an agentic platform that effortlessly updates hundreds of repositories in the background, alongside the claim that “Spotify's top developers haven't manually written code since December 2025.”

Rippling announces Rippling AI, promising users the ability to “Ask anything. Do everything,” claiming the AI flawlessly executes complex, data-heavy tasks.

The industry narrative is incredibly seductive: just plug an AI model into your codebase, and watch your productivity skyrocket.

But there is an uncomfortable truth that many leaders are ignoring, often because it lacks the sex appeal of an AI breakthrough: In almost every successful enterprise case, AI is just a thin layer of orchestration sitting on top of years of rigorous, foundational engineering practices.

The Reality of the Language Model

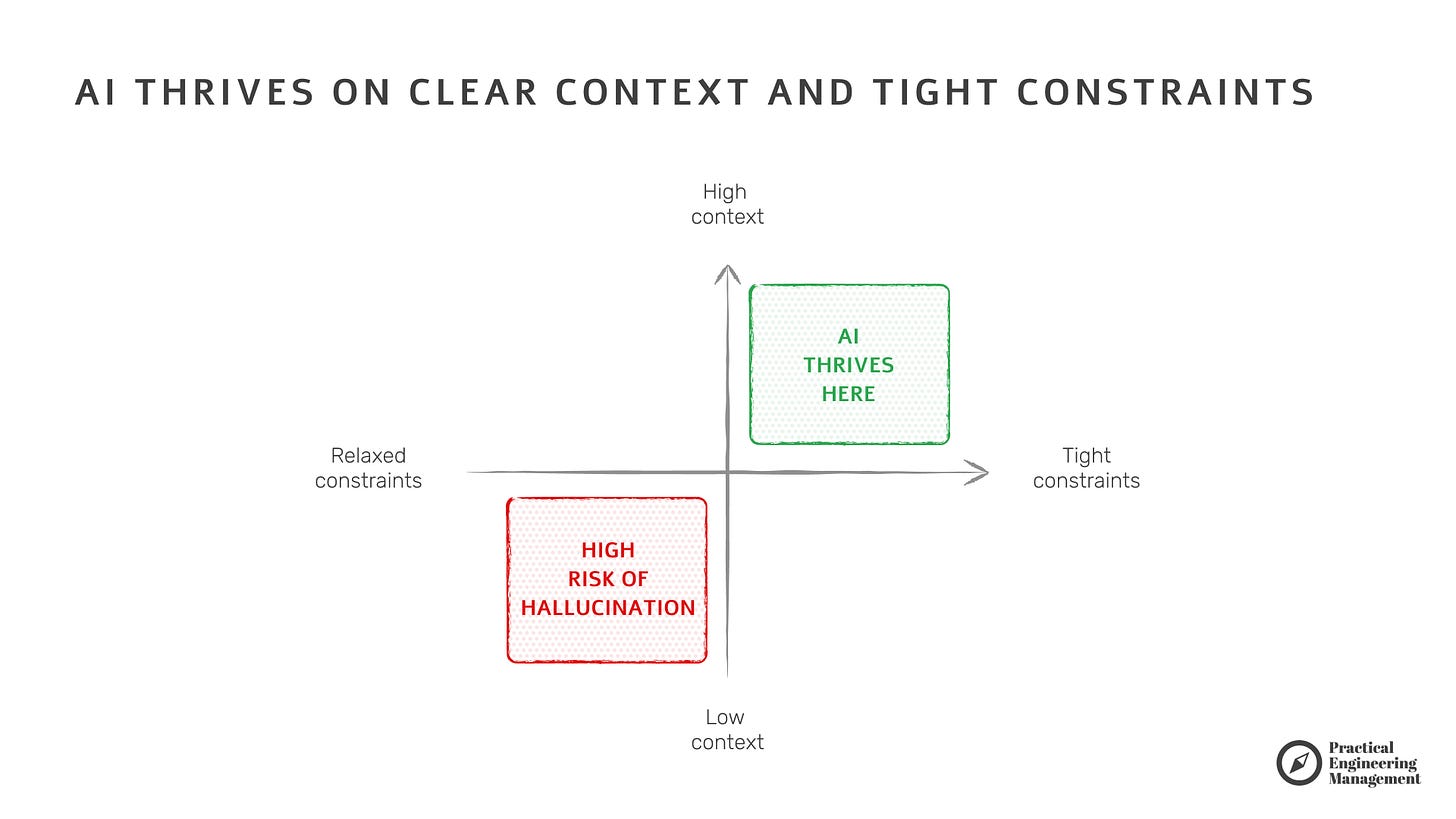

When we strip away the marketing, most of today’s AI boils down to Large Language Models (LLMs). LLMs are not sentient problem solvers; they are highly advanced pattern matchers and text generators. And what do pattern matchers need to function effectively?

Structured data, rich context, and extensive, immediate feedback.

If you drop an LLM into a messy, fragmented architecture with outdated documentation and flaky CI/CD pipelines, you will not get magic. You will get confident hallucinations and a scaling disaster. The companies that are succeeding with AI aren’t doing so because they have a better LLM; they are succeeding because their underlying engineering environment speaks a language the LLM can understand.

The Unglamorous Foundation of “AI Readiness”

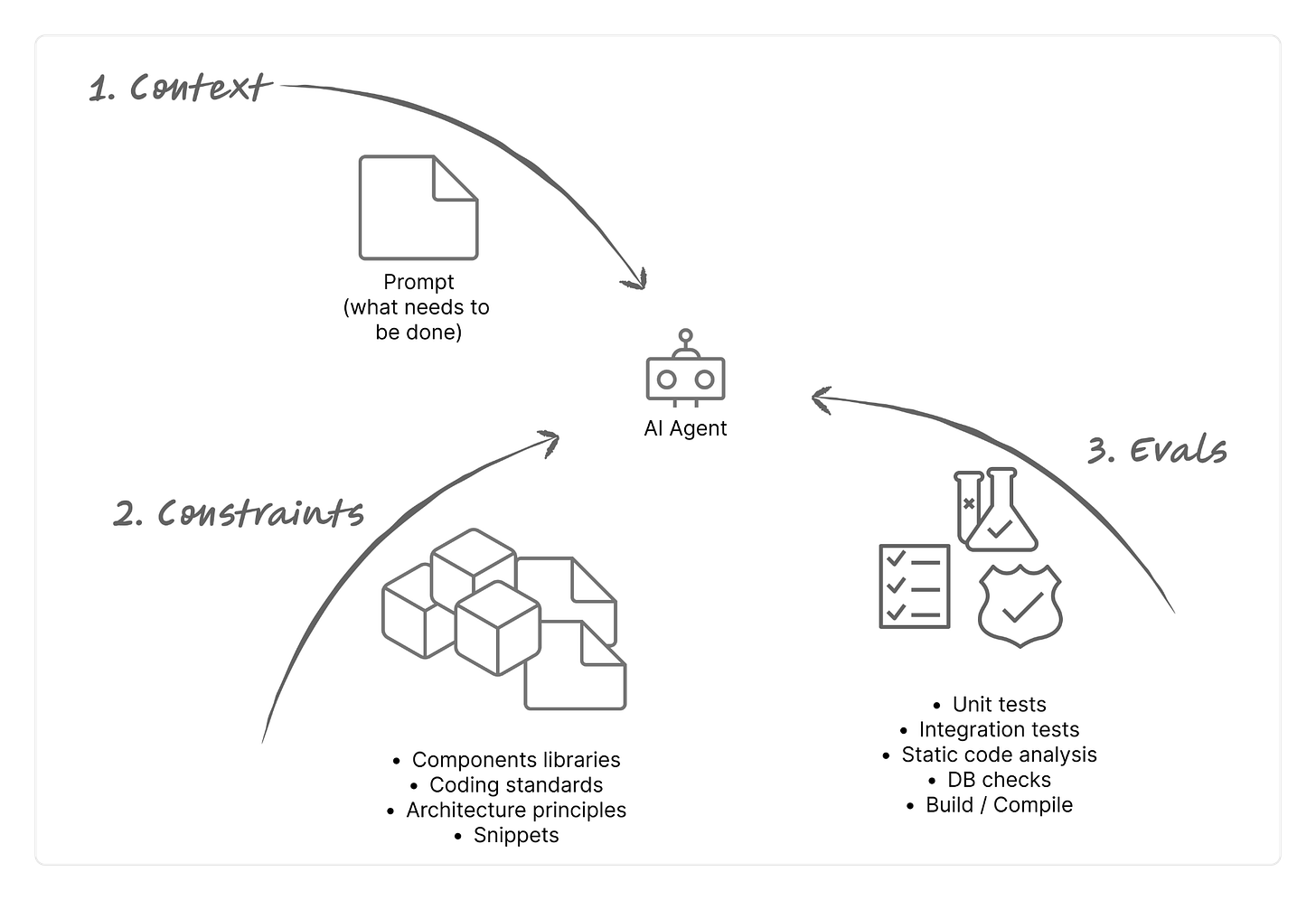

What does this foundation actually look like? At its core, it is the mastery of the basics we’ve been preaching for the last decade. To build systems like Honk or Rippling AI, you first need:

A Consistent Tech Stack: Uniformity across the organization reduces engineers' cognitive load. For an AI agent navigating multiple repositories without breaking things, it is an absolute necessity.

Immaculate Cataloging: Tools like Spotify’s Backstage (a unified software catalog) and Infrastructure as Code ensure that ownership, lifecycle, and dependencies are mapped and machine-readable.

Documentation as Code: OpenAPI specs, AsyncAPI, Architecture Decision Records (ADRs), and Product Requirements Documents (PRDs) are no longer just for human onboarding; they are exactly the context your LLMs need to generate accurate code.

Ruthless Quality Checks: A layered, automated testing strategy of fast linting, unit tests, and integration tests. Anything that returns technical feedback about code validity is priceless for AI iterations.

Immediate CI/CD Feedback: If your build takes hours, AI agents will wait hours. AI needs deterministic feedback within minutes to correct its own mistakes (this is the core of Spotify’s “Verification Loop,” which makes their non-deterministic AI predictable and safe).

Structured Observability: Logs, traces, and metrics must be structured and easily consumed by machines, not just visualized on dashboards for humans.
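To make the feedback-loop item above concrete, here is a minimal sketch of a verification loop, assuming the general shape described in this article rather than Spotify's actual implementation. The names `verification_loop`, `fake_model`, and `fake_ci` are hypothetical stand-ins: a real setup would call a model in `propose` and run linters, tests, or a build in `verify`.

```python
from typing import Callable, Optional


def verification_loop(
    propose: Callable[[Optional[str]], str],
    verify: Callable[[str], Optional[str]],
    max_attempts: int = 3,
) -> Optional[str]:
    """Return the first candidate that passes verification, or None."""
    feedback: Optional[str] = None
    for _ in range(max_attempts):
        candidate = propose(feedback)  # non-deterministic step (the model)
        feedback = verify(candidate)   # deterministic step (lint, tests, build)
        if feedback is None:           # None means every check passed
            return candidate
    return None  # out of attempts: hand the task back to a human


# Stand-ins for illustration only: a fake "model" and a fake "CI check".
def fake_model(feedback: Optional[str]) -> str:
    # First attempt is wrong; once it receives feedback it returns a fix.
    if feedback:
        return "def add(a, b): return a + b"
    return "def add(a, b): return a - b"


def fake_ci(code: str) -> Optional[str]:
    namespace: dict = {}
    exec(code, namespace)  # acceptable for a toy example, not for untrusted code
    if namespace["add"](2, 3) == 5:
        return None
    return "add(2, 3) should equal 5"


result = verification_loop(fake_model, fake_ci)
```

The design point is that the model never decides on its own that it is done. A deterministic check does, and the faster that check runs, the more attempts fit into the same wall-clock time.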

Dissecting the “AI Writes 80% of Our Code” Myth

When a company boasts that AI writes the vast majority of its code, it pays to look under the hood.

In many cases, the heavy lifting isn’t being done by a neural network dreaming up novel solutions. It is being done by countless, single-purpose, highly predictable scripts that engineers wrote years ago. These scripts are doing the grunt work: automatically bumping dependency versions, committing fixes for known security vulnerabilities, and resolving predictable code smells based on linter output.
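As an illustration of how small and predictable such scripts can be, here is a sketch of a dependency bump for a pinned `requirements.txt`-style file. The function name and the file format are assumptions made for this example; it is not taken from any company's tooling.

```python
import re


def bump_pinned_version(requirements: str, package: str, new_version: str) -> str:
    """Rewrite 'package==old' to 'package==new'; leave every other line untouched."""
    pattern = re.compile(rf"^({re.escape(package)})==\S+$", flags=re.MULTILINE)
    # A function replacement avoids any escaping surprises in new_version.
    return pattern.sub(lambda m: f"{m.group(1)}=={new_version}", requirements)


before = "requests==2.31.0\nrequests-toolbelt==1.0.0\nflask==3.0.0\n"
after = bump_pinned_version(before, "requests", "2.32.0")
```

Nothing here involves a model. The same input always produces the same output, which is what makes it safe to run across many repositories unattended.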

Consider Spotify. Before they had Honk (the AI brain), they built Fleetshift (the deterministic muscle). Fleetshift is the infrastructure that knows how to clone hundreds of repos, run scripts, manage branches, open PRs, and auto-merge. Honk is simply the LLM layer that translates a natural language prompt into instructions for Fleetshift. Without Fleetshift, Honk is just a chatbot.
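The split between the two layers might look roughly like the sketch below. Everything in it is hypothetical: `FleetTask`, `ALLOWED_ACTIONS`, `parse_plan`, and `execute` are illustrative names, not Fleetshift's or Honk's real interfaces. It only shows the idea that the model produces a structured plan, and a deterministic layer validates and carries it out.

```python
import json
from dataclasses import dataclass

# Hypothetical allow-list: the only operations the deterministic layer supports.
ALLOWED_ACTIONS = {"bump_dependency", "run_script"}


@dataclass(frozen=True)
class FleetTask:
    action: str
    repos: tuple[str, ...]
    params: dict


def parse_plan(llm_output: str) -> FleetTask:
    """Turn model output into a task, rejecting anything outside the allow-list."""
    data = json.loads(llm_output)
    if data.get("action") not in ALLOWED_ACTIONS:
        raise ValueError(f"unsupported action: {data.get('action')!r}")
    repos = tuple(data.get("repos", ()))
    if not repos:
        raise ValueError("plan must name at least one repository")
    return FleetTask(data["action"], repos, dict(data.get("params", {})))


def execute(task: FleetTask) -> list[str]:
    """Deterministic side: the same task always yields the same steps."""
    return [
        f"{repo}: {task.action} {sorted(task.params.items())}"
        for repo in task.repos
    ]


# In a real system this JSON string would come from the model.
plan = (
    '{"action": "bump_dependency", "repos": ["svc-a", "svc-b"], '
    '"params": {"package": "requests", "version": "2.32.0"}}'
)
steps = execute(parse_plan(plan))
```

The model's output is treated as untrusted input. If it asks for something the executor does not support, the request fails at parse time instead of reaching a repository.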

Similarly, Rippling’s AI magic is built directly on top of their meticulously engineered real-time reporting engine—a deterministic data architecture designed long before generative AI was a buzzword.

Even when AI is writing net-new code, it is heavily constrained. It is guided by Swagger/OpenAPI specs, internal ADRs, and high-quality templates. The “AI Skills” we marvel at are often nothing more than standard engineering best practices combined into a masterfully crafted system prompt that radically narrows the model’s focus.
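A sketch of what that narrowing can look like in practice: the prompt is assembled from artifacts the team already maintains. The function name, section headings, and sample inputs below are assumptions for illustration only, not any vendor's prompt format.

```python
def build_system_prompt(task: str, api_spec: str, adrs: list[str], template: str) -> str:
    """Assemble a constrained prompt from existing engineering artifacts."""
    sections = [
        "You are modifying an existing service. Follow the constraints below.",
        "## Task\n" + task.strip(),
        "## API contract (OpenAPI excerpt)\n" + api_spec.strip(),
        "## Architecture decisions\n" + "\n".join(f"- {a.strip()}" for a in adrs),
        "## Code template to follow\n" + template.strip(),
        "Only change what the task requires. Do not add dependencies.",
    ]
    return "\n\n".join(sections)


# Hypothetical inputs; in practice these would be read from the repository.
prompt = build_system_prompt(
    task="Add a GET /health endpoint",
    api_spec="paths:\n  /health:\n    get:\n      responses:\n        '200': {description: OK}",
    adrs=["HTTP handlers live in handlers/", "Responses are JSON only"],
    template="def handler(request):\n    ...",
)
```

None of the inputs is AI-specific. If the spec, the decision records, or the template do not exist, there is nothing to put in the prompt.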

The Takeaway for Leaders

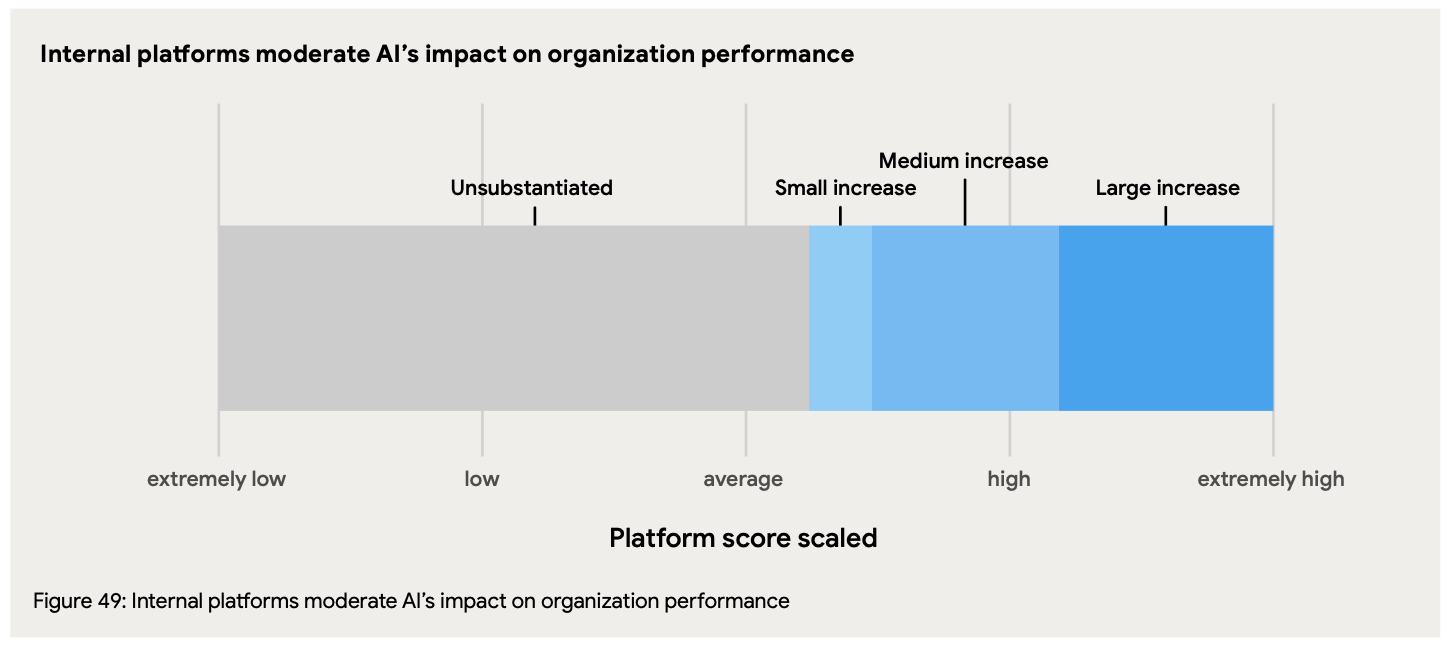

The lesson here is not that AI is overhyped, but that its prerequisites are severely undervalued. AI is the ultimate force multiplier, but multiplying by zero still gets you zero.

If you want agentic coding, automated migrations, and intelligent data retrieval, stop asking your teams how quickly they can integrate an LLM.

Instead, ask them about the state of your service catalog, the speed of your CI/CD feedback loops, and the consistency of your documentation.

The companies winning the AI race today aren’t the ones who pivoted to AI the fastest. They are the ones whose long-term investments in unglamorous engineering excellence are finally paying off.