The AI Amplifying Capabilities

Move Beyond Tools

If you are leading an engineering organization today, you don’t need another pitch about the promise of AI. You already know the tools are out there.

Your job is no longer about whether to adopt AI, but about how to actually get a return on the investment without destabilizing your systems. Especially when the C-level has been pushing you hard on this transformation, we need to stop looking at AI as a magic wand and start treating it for what it truly is: an amplifier.

What Are AI Capabilities?

The greatest returns from AI do not come from the tools themselves, but from investing in the foundational technical and cultural environment that surrounds them.

The DORA team recently introduced the AI Capabilities Model. It establishes a critical truth: AI magnifies the strengths of high-performing organizations but also rapidly accelerates the dysfunctions of struggling ones.

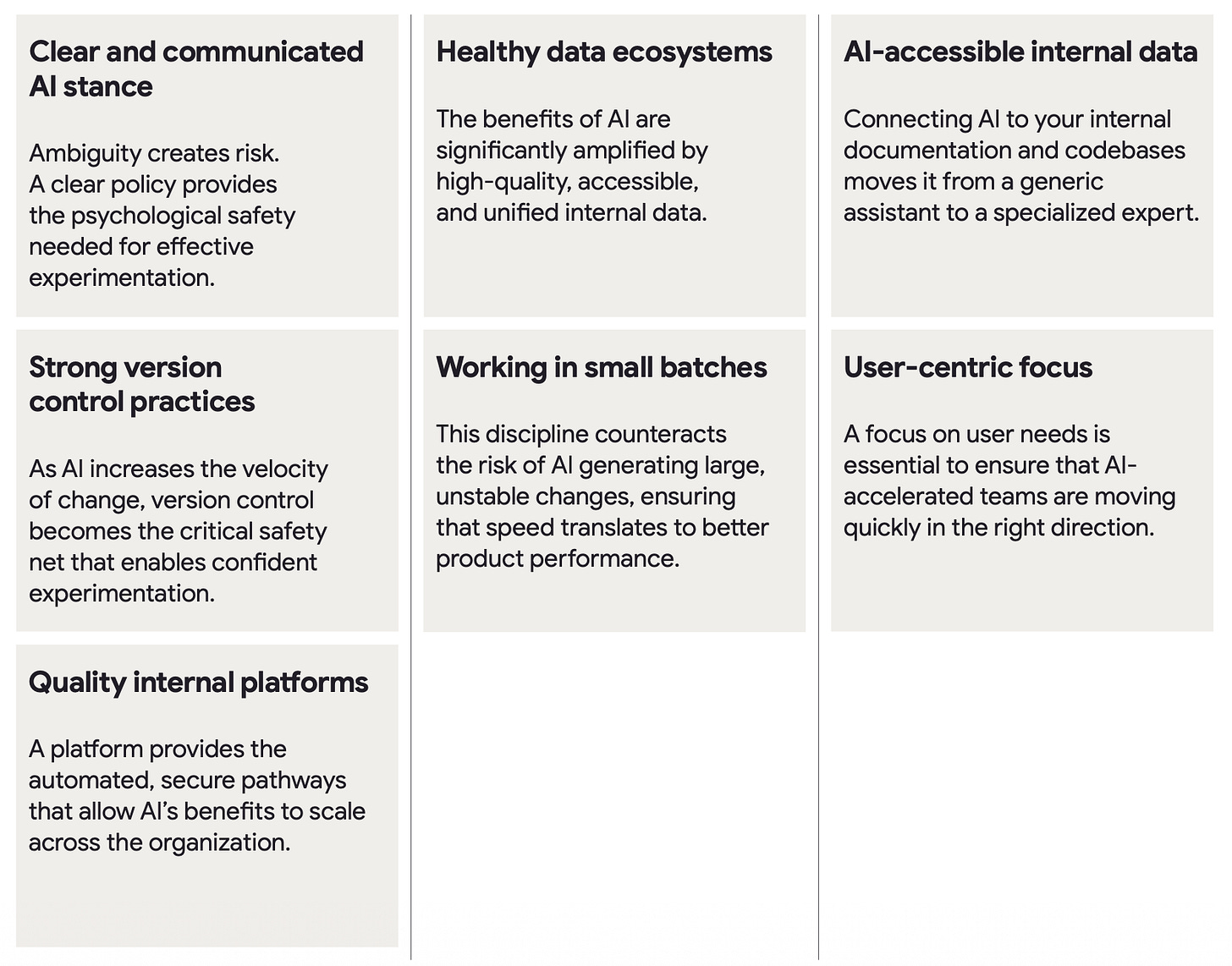

Here are seven foundational capabilities that serve as the environment where AI can thrive:

Clear and communicated AI stance

Healthy data ecosystems

AI-accessible internal data

Strong version control practices

Working in small batches

User-centric focus

Quality internal platforms

If your underlying data is a mess, AI will confidently generate messy, hallucinated outputs faster than ever. If your deployment pipelines are bottlenecked, AI will just help your team write code that piles up at the bottleneck.

When we introduced AI tools for the first time, we researched how they were being used. What we noticed: engineers seen as low performers were complaining about the generated code. In codebases with low quality standards, AI generated flaky tests or tangled code that did the job somehow, but no one fully understood how it worked. The result? Strong engineers spent much more time reviewing and often hand-fixing the generated PRs.

The Empirical Data Behind the Model

The AI Capabilities Model is built on empirical data: over 100 hours of qualitative interviews plus survey responses. From that research, we know that roughly 90% of professionals are using AI in their work.

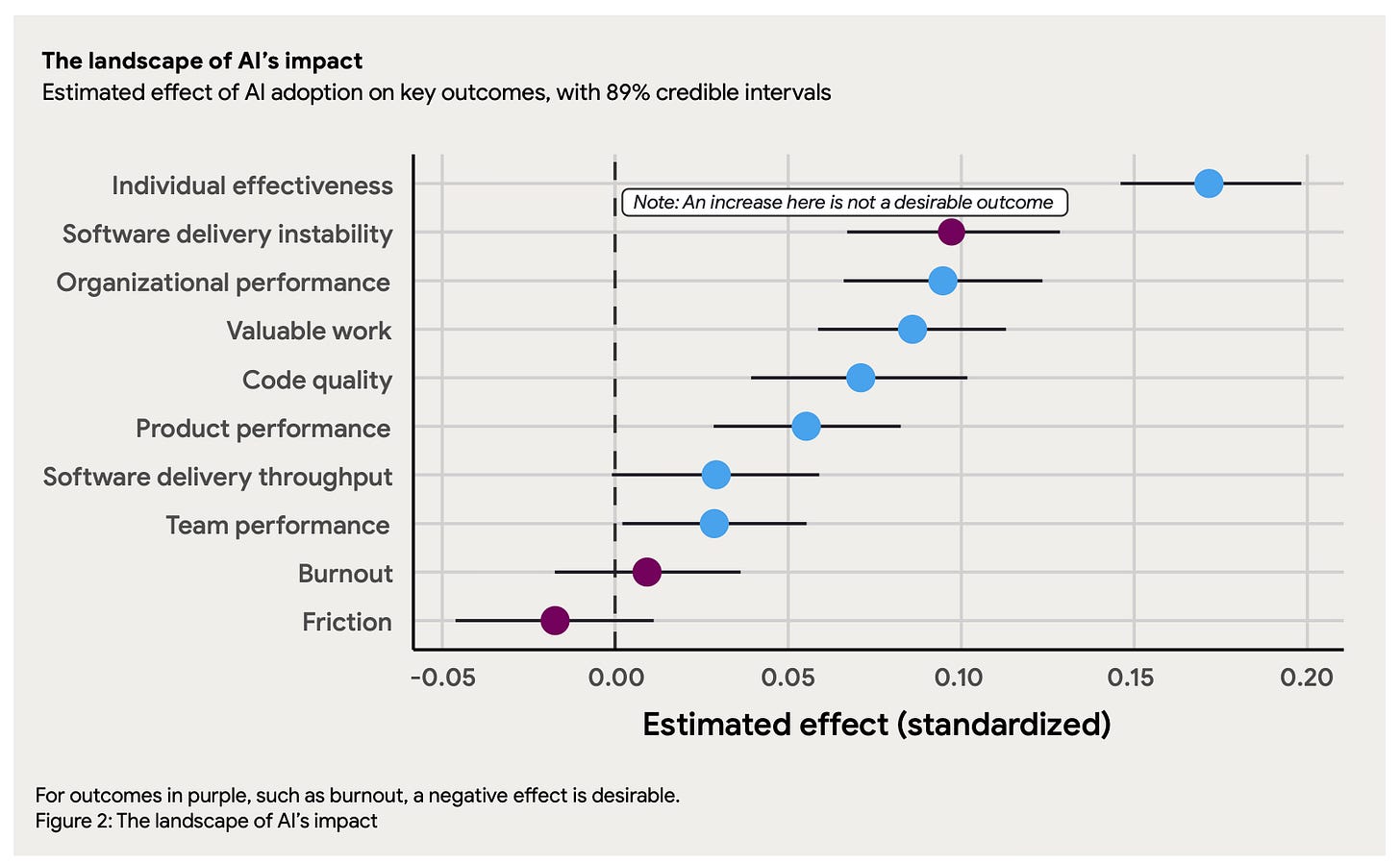

So yeah, it’s not about AI adoption anymore; it’s about finding the way to amplify AI. But not everyone experiences the same outcomes. The data shows exactly how the presence (or absence) of these capabilities impacts key organizational metrics:

Individual Effectiveness & Code Quality: When AI is securely connected to internal data (context engineering), it transitions from a generic assistant to a specialized expert, significantly amplifying individual effectiveness and code quality.

Organizational Performance: The positive impact of AI on overall organizational performance depends heavily on a high-quality internal platform and a healthy data ecosystem. Without them, localized productivity gains are swallowed by downstream bottlenecks.

Product Performance & Friction: AI can generate large amounts of code quickly, potentially increasing instability in software delivery. However, enforcing the discipline of working in small batches turns AI’s effect on friction into a net positive and amplifies product performance.

Team Performance: A team with poor user focus that adopts AI is likely to see its performance decrease—they just build the wrong things faster. Conversely, high user-centricity coupled with AI drastically increases team performance.

Ironically, the AI revolution can be dangerous for the company. Even if we master delivery practices (small batches of change, quick CI/CD, rapid delivery), the key is to ensure we build the right things in the first place.

Why?

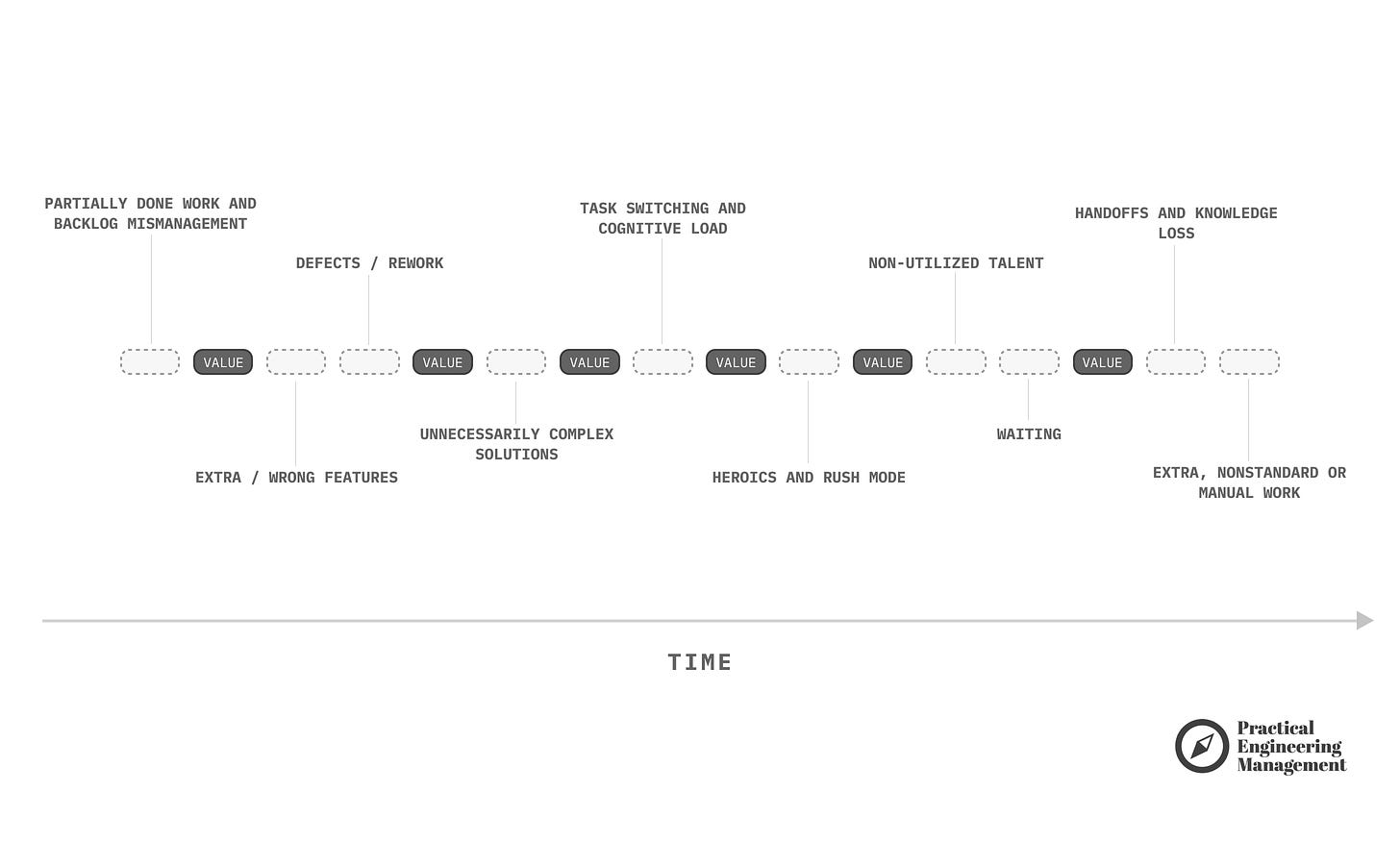

Every feature we produce requires ongoing maintenance, which increases the platform's complexity. “Extra/Wrong Features” is one type of software engineering waste (read more: Ten Types of Software Engineering Waste).

In his books, Marty Cagan states that only 10-30 percent of the features companies push bring positive results. And this was before the “AI revolution”. We are still waiting for more data about how much “waste” we produce today.

Practical Steps to Build Each Capability

Transforming these findings into daily engineering reality requires intentional leadership. Here are some practical steps you can take to foster each of the seven capabilities within your organization.

1. Clarify and Socialize Your AI Stance

Ambiguity creates risk and stifles innovation. Developers need to know they are operating within approved boundaries.

We cannot keep our teams in the cave anymore and forbid the use of AI. They will find a way anyway (I have met engineers who brought a second laptop and did agentic work on their personal machines when their corporate environment blocked Claude or Copilot).

On the other hand, we cannot just let them use whatever they want without supervision. The data leakage risk is higher than ever (just read the news, e.g. about the latest leak on the Lovable platform).

As a starter for building good policies, use the three-bucket approach: Clearly categorize use cases into “Prohibited” (e.g., inputting PII into public models), “Permitted with guardrails” (e.g., using proprietary code with approved enterprise tools), and “Allowed” (e.g., boilerplate generation).

Host this as a living document in your internal developer portal and establish a feedback loop for developers to ask questions.
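To make the three buckets less abstract, here is a minimal sketch of how such a policy could be expressed as code, for example behind a self-service check in the developer portal. Everything in it is an assumption for illustration: the `UseCase` fields, the `classify` function, and especially the rules for cases the policy examples above do not cover (sensitive data in an unapproved tool is treated as prohibited, and anything without sensitive data is treated as allowed). Your real policy will have more dimensions than this.

```python
from dataclasses import dataclass
from enum import Enum


class Bucket(Enum):
    PROHIBITED = "Prohibited"
    GUARDRAILS = "Permitted with guardrails"
    ALLOWED = "Allowed"


@dataclass(frozen=True)
class UseCase:
    # Hypothetical attributes; a real policy will likely need more of them.
    contains_pii: bool           # personal data appears in the prompt or attachments
    uses_proprietary_code: bool  # internal source code is sent to the model
    tool_is_approved: bool       # the tool is on the approved enterprise list


def classify(use_case: UseCase) -> Bucket:
    """Map a use case to one of the three buckets, most restrictive rule first."""
    sensitive = use_case.contains_pii or use_case.uses_proprietary_code
    # Assumption: any sensitive input to a non-approved (e.g. public) tool is prohibited.
    if sensitive and not use_case.tool_is_approved:
        return Bucket.PROHIBITED
    # Sensitive input is fine only inside approved tools, under guardrails.
    if sensitive:
        return Bucket.GUARDRAILS
    # Nothing sensitive involved, e.g. generic boilerplate generation.
    return Bucket.ALLOWED
```

For example, `classify(UseCase(contains_pii=True, uses_proprietary_code=False, tool_is_approved=False))` lands in the "Prohibited" bucket, while plain boilerplate generation with no sensitive input lands in "Allowed".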

2. Cultivate a Healthy Data Ecosystem

Data is not a by-product; it is a strategic asset today.

Invest in data governance: Establish clear owners and stewards for critical data domains so accountability is crystal clear.

Prioritize a single source of truth: Consolidate or federate siloed data to create a unified view.

Document locally: Have teams document key datasets in application README.md files, treating metadata as a versioned code artifact.

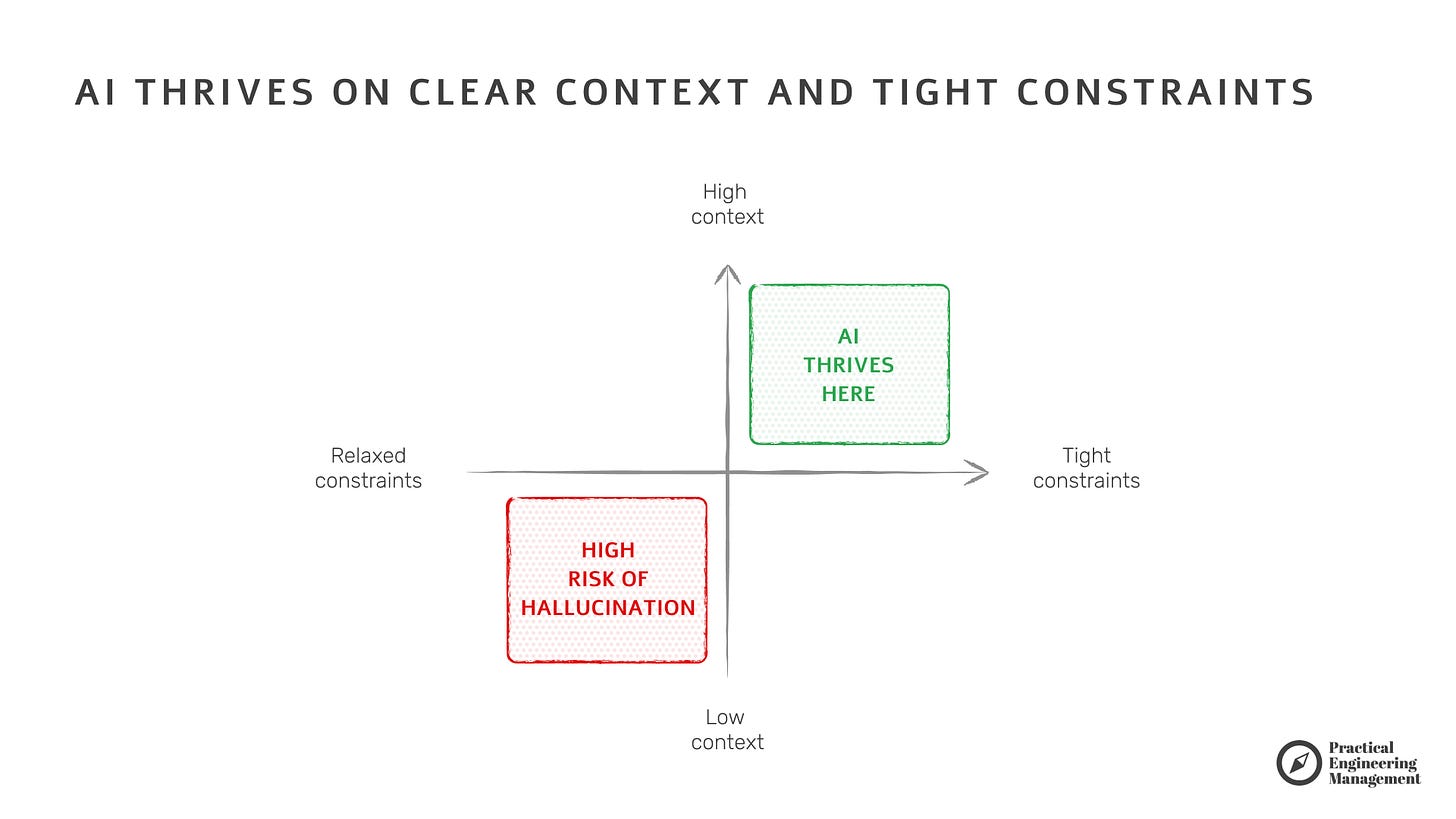

Garbage in, garbage out. If we don’t provide data, AI hallucinates. But if we provide messy data, the AI becomes extremely certain… about wrong things.

A good practice today is to start treating data as written for LLMs in the first place, not for humans, and to remember that LLMs do not necessarily reason like us.

Read more: AI Thrives on Clear Context and Tight Constraints.

3. Make Internal Data AI-Accessible (Context Engineering)

Connecting AI to your internal systems unlocks true value, moving beyond “prompt engineering” to “context engineering”.

Curate your code context: Do not index all repositories. Only index branches that represent your organization’s gold standard to prevent AI from learning your technical debt.

Focus on relevance, not volume: Use Retrieval-Augmented Generation (RAG) or a Model Context Protocol (MCP) server to feed AI only the specific context it needs, reducing hallucinations.

Layer your security: Ensure retrieval mechanisms operate with the user’s own credentials to maintain strict access controls.
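The relevance and security points above can be sketched together. The example below is a toy, not a real RAG or MCP implementation: plain keyword overlap stands in for an embedding search, and the `Document` type, its `allowed_groups` field, and the `retrieve_context` function are hypothetical names. What it tries to show is the ordering: the access check runs with the caller's own group memberships before anything is scored, and only a handful of relevant documents reach the model.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    allowed_groups: frozenset[str]  # groups permitted to read this document


def retrieve_context(query: str, user_groups: set[str],
                     index: list[Document], limit: int = 3) -> list[Document]:
    """Return the most relevant documents that this user is allowed to read."""
    terms = set(query.lower().split())
    scored = []
    for doc in index:
        # Access check first: documents the user cannot read are never scored.
        if not (doc.allowed_groups & user_groups):
            continue
        # Toy relevance score: number of query words found in the document.
        overlap = len(terms & set(doc.text.lower().split()))
        if overlap > 0:
            scored.append((overlap, doc))
    # Relevance over volume: keep only the top few matches.
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:limit]]
```

A user in the `payments` group asking about a payments deploy would get the payments runbook, but never an HR document that happens to mention payments, because that document is filtered out before scoring.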

In the past months, I’ve been observing new challenges in this field. Non-technical people (PMs, Designers, Ops) vibe code their own apps. Without engineers’ help, they create scripts that access various 3rd-party APIs, such as fetching data from SAP/Salesforce, processing it, and sending it via internal notification channels (email systems, Slack, etc.). Overall, this is an amazing thing to see, but it also brings many risks, like API key leakage and unauthorized operations (it’s ok if they retrieve data, but when they start modifying or deleting it, this becomes dangerous).

Not to mention the low observability of such direct integrations or the maintenance of these solutions over an extended period, as consumed APIs will change.

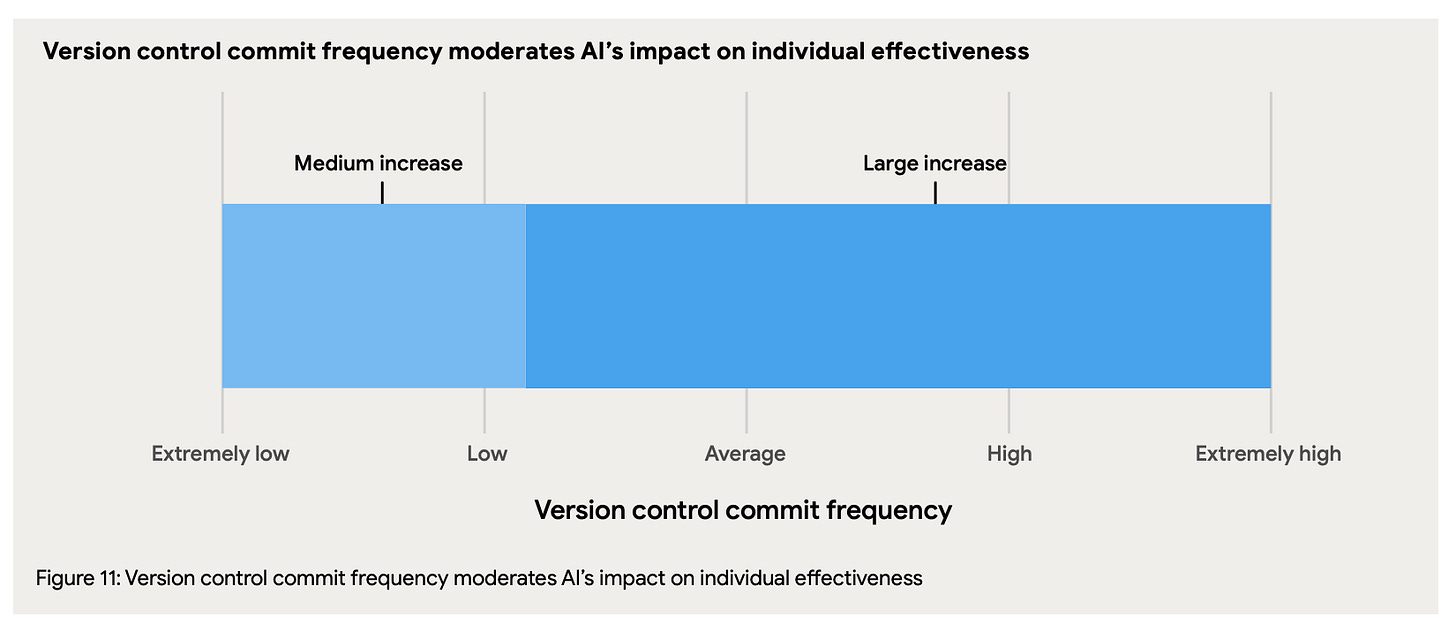

4. Fortify Strong Version Control Practices

Because AI accelerates code generation, version control is your ultimate safety net.

Version everything: This includes application code, test scripts, AI prompts, and AI agent configuration files (like GEMINI.md).

Make small, frequent commits: Bring outer-loop discipline to the inner loop so undesirable AI changes can be easily reverted.

Align on AI commit philosophy: As a team, explicitly agree whether AI can commit directly or if a human must review and commit locally first.

The discipline of making small, frequent commits and relying on rollback capabilities allows teams to confidently manage and experiment with AI-generated code.

This isn’t a new thing. It has been at the core of DORA research over the years. Good version control practices help reduce the time required to recover from failed deployments. They enable the easy recreation of environments for troubleshooting. They also create crucial psychological safety that allows engineers to experiment, knowing they can easily revert to a stable state.
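One way to nudge a team toward small commits is a lightweight size check, for instance in a pre-commit hook. The sketch below assumes you feed it the text produced by `git diff --cached --numstat`, which prints one line per file as added, deleted, and path separated by tabs, with `-` in place of the counts for binary files. The function names and the 200-line threshold are my assumptions, not a recommendation from the research; pick a limit that fits your codebase.

```python
def changed_lines(numstat: str) -> int:
    """Sum added and deleted lines from `git diff --numstat` output."""
    total = 0
    for line in numstat.splitlines():
        parts = line.split("\t")
        if len(parts) < 3:
            continue  # skip blank or malformed lines
        added, deleted = parts[0], parts[1]
        # Binary files are reported as "-" for both counts; they are ignored here.
        if added.isdigit() and deleted.isdigit():
            total += int(added) + int(deleted)
    return total


def is_small_batch(numstat: str, max_lines: int = 200) -> bool:
    """Return True when the staged change stays within the agreed size limit."""
    # max_lines is a hypothetical team convention, not a standard value.
    return changed_lines(numstat) <= max_lines
```

A hook could print a warning rather than block the commit when `is_small_batch` returns False, which keeps the rule a conversation starter instead of a gate.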

5. Enforce Working in Small Batches

The true Product Operating Model relies on fundamentally changing the way things are built through frequent, small releases (I covered it in: The Role of Engineering in Product Model Transformation - Changing the Way Product Is Built). With AI-generated code, this becomes an even more fundamental principle. On the one hand, we want to avoid producing overengineered features no one wants to use. On the other hand, we don’t want to overwhelm customers with constant changes.

A few ideas to consider:

Strive for continuous delivery: Elite performers deploy on demand with a change lead time of less than one day. If continuous delivery is currently out of reach, organizations should aim to release at least once every two weeks.

Throttle releases with feature flags: Pushing changes daily can create too much cognitive load for customers. Feature flags allow you to control the release of features dynamically without deploying new code. They enable gradual rollouts, A/B testing, and quick rollbacks if an AI-generated change introduces instability.

Use early access and user segmentation: Deploy new changes to a small subset of users before a broader rollout. This limits the impact of possible incidents and helps collect early user feedback on new functionality.
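The last two ideas, feature flags and early-access segments, can be sketched in a few lines. This is a simplified illustration under stated assumptions, not how any particular feature flag product works: `rollout_bucket` and `is_enabled` are hypothetical names, and hashing the flag name together with the user ID is just one common way to get a stable, roughly uniform bucket per user.

```python
from __future__ import annotations

import hashlib


def rollout_bucket(flag_name: str, user_id: str) -> int:
    """Deterministically map a (flag, user) pair to a bucket from 0 to 99."""
    digest = hashlib.sha256(f"{flag_name}:{user_id}".encode("utf-8")).hexdigest()
    return int(digest, 16) % 100


def is_enabled(flag_name: str, user_id: str, rollout_percent: int,
               early_access: frozenset[str] = frozenset()) -> bool:
    """Decide whether a user sees the feature behind the flag."""
    # Early-access users always get the feature, whatever the rollout stage.
    if user_id in early_access:
        return True
    # Everyone else is included once the rollout percentage passes their bucket.
    return rollout_bucket(flag_name, user_id) < rollout_percent
```

Because the bucket is derived from a hash rather than a random draw, a user who sees the feature at 10% keeps seeing it at 50%, and setting `rollout_percent` to 0 acts as the quick rollback without deploying new code.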

Working in small batches is also a great way to keep motivation high in your team. For most of my engineering leadership career, I have applied the rule of 1-week onboarding: engineers who join my teams are expected to deliver their first change to production within the first 7 days. There is nothing more rewarding for builders than seeing their work being used by customers.

6. Center the User in Product Strategy

Before AI, developers crafted much of the code by hand, naturally weaving in their human intuition about what the user needed. Today, AI generates massive amounts of that code, but the AI is completely blind to your users. It does not know their pain points, their goals, or their workflows.

Therefore, we must step in as the user’s proxy. If a team lacks this user-centric focus, adopting AI will just help them build the wrong things faster, ultimately harming team performance.

Integrate low-latency feedback loops: AI models must be provided with precise, current end-user context. Create channels for immediate feedback, such as in-app micro-surveys right after a user completes a critical task, so insights can be immediately used to refine AI prompts and feature development.

Make user metrics as visible as technical metrics: A team’s focus follows what it measures. Display user experience metrics—like task completion rates, customer satisfaction (CSAT), or time-on-task—prominently in developer tools and team meetings so developers and their AI tools are grounded in the impact they are having on the user.

Involve engineering directly in user research: Distilled research findings can strip away necessary nuance. Invite developers to observe user testing sessions firsthand. Seeing a user struggle builds the deep empathy required to write the specific prompts and tests that ensure AI serves the user’s actual needs.
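If you want the user metrics mentioned above on a dashboard next to build times and error rates, the calculation itself is small. The sketch below is only an illustration: `TaskSession` and `summarize` are hypothetical names, the input is assumed to come from your own product analytics, and CSAT is computed here as the share of 4 and 5 answers on a 1-5 survey, which is a common convention but not the only one.

```python
from dataclasses import dataclass
from statistics import median
from typing import Optional


@dataclass(frozen=True)
class TaskSession:
    completed: bool              # did the user finish the critical task?
    seconds_on_task: float       # time spent on the task
    csat_score: Optional[int]    # 1-5 micro-survey answer, None if skipped


def summarize(sessions: list[TaskSession]) -> dict[str, float]:
    """Compute task completion rate, median time-on-task, and CSAT."""
    if not sessions:
        raise ValueError("at least one session is required")
    completed = [s for s in sessions if s.completed]
    scores = [s.csat_score for s in sessions if s.csat_score is not None]
    return {
        "task_completion_rate": len(completed) / len(sessions),
        # Time-on-task is measured only for sessions that finished the task.
        "median_seconds_on_task": (
            median([s.seconds_on_task for s in completed]) if completed else 0.0
        ),
        # Assumption: a score of 4 or 5 counts as a satisfied user.
        "csat": sum(1 for x in scores if x >= 4) / len(scores) if scores else 0.0,
    }
```

The same summary can also be pasted into the context an AI assistant receives, so that generated changes are discussed against user outcomes and not only against passing tests.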

One of the most effective frameworks that helped me become more focused on users is the Problem Solving Framework.

7. Invest in Quality Internal Platforms and Built-In Observability

Your internal platform acts as the essential distribution and governance layer for AI, ensuring that individual productivity gains aren't swallowed by downstream bottlenecks.

However, a world-class platform must also provide the safety nets necessary for a true product operating model, ensuring teams can detect, diagnose, and resolve issues efficiently as their release velocity increases (read more about: Amplification).

Adopt a product mindset: Treat your internal developers as your customers. Assign a product manager to own the Developer Experience (DevEx) and build “golden paths” that solve actual developer pain points rather than dictating rigid, “ivory tower” architectures.

Shift cognitive load down: Abstract away underlying complexity (like Kubernetes, security policies, or infrastructure provisioning) into the platform so product engineers can focus entirely on solving customer problems.

Bake in default observability: AI-assisted development and frequent, small releases naturally raise stability risks that cannot be mitigated by testing alone. The platform should automatically provision key observability pillars—Metrics, Logs, Traces, Alerts, and Service Level Objectives (SLOs)—for every new service using standardized tools.

Start with a minimum viable platform: Find the most painful developer journey (e.g., scaffolding a new service), build a golden path for it, and iterate based on real feedback instead of attempting a “big bang” release.
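To illustrate what "default observability" on a golden path might look like, here is a minimal sketch of a scaffolding step that hands every new service the same starting configuration. All of it is assumed for illustration: the `default_observability` function, the config keys, the 10% trace sampling, and the 99.5% availability target over 30 days are placeholders, not values from the DORA research or from any specific tool.

```python
def default_observability(service_name: str,
                          availability_slo: float = 0.995,
                          window_days: int = 30) -> dict:
    """Build the default observability config a new service starts with."""
    if not 0 < availability_slo < 1:
        raise ValueError("availability_slo must be between 0 and 1")
    # Error budget: the downtime the SLO tolerates within the window, in minutes.
    budget_minutes = round((1 - availability_slo) * window_days * 24 * 60, 1)
    return {
        "service": service_name,
        "metrics": {"enabled": True, "dashboard": f"{service_name}-overview"},
        "logs": {"enabled": True, "format": "json"},
        "traces": {"enabled": True, "sample_rate": 0.1},
        "alerts": [{"name": f"{service_name}-availability", "slo": "availability"}],
        "slos": {
            "availability": {
                "target": availability_slo,
                "window_days": window_days,
                "error_budget_minutes": budget_minutes,
            }
        },
    }
```

With the defaults above, a 99.5% target over 30 days leaves an error budget of 216 minutes. Teams can override the values later, but no service ships without metrics, logs, traces, alerts, and an SLO in place.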

End Words

AI will not fix broken engineering cultures, but it will dramatically accelerate healthy ones. By assessing your teams against these seven capabilities today, you can stop chasing the hype and start building a resilient, high-performing engineering organization for tomorrow.